Zero-Shot to Chain-of-Thought: A Developer's Guide to Writing Effective AI Prompts

D. Rout

April 17, 2026 · 8 min read


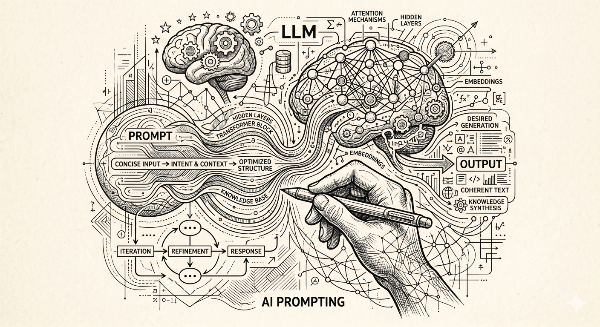

If you have ever stared at an AI response and thought, "that's not even close to what I asked for", the problem often lies in your prompt rather than in the model. Prompting is the interface between your intent and the model's output, and like any interface, it rewards deliberate design. In this guide, you will learn four foundational prompting techniques (zero-shot, one-shot, few-shot, and chain-of-thought), each with a TypeScript code sample that uses the Anthropic Claude API, so you can apply them in your own projects immediately.

Prerequisites

Before diving in, make sure you have the following:

- Node.js 18+ installed

- An Anthropic API key (sign up at console.anthropic.com)

- Basic familiarity with async/await in JavaScript or TypeScript

- The Anthropic SDK installed:

npm install @anthropic-ai/sdk

Set your API key as an environment variable:

export ANTHROPIC_API_KEY="sk-ant-..."

Step 1 — Bootstrap a Reusable API Client

Before writing any prompts, wrap the Anthropic client in a thin helper so all examples share the same setup:

// src/client.ts

import Anthropic from "@anthropic-ai/sdk";

export const anthropic = new Anthropic({

apiKey: process.env.ANTHROPIC_API_KEY,

});

export async function ask(prompt: string, system?: string): Promise<string> {

const message = await anthropic.messages.create({

model: "claude-opus-4-5",

max_tokens: 1024,

system: system ?? "You are a helpful assistant.",

messages: [{ role: "user", content: prompt }],

});

const block = message.content[0];

return block.type === "text" ? block.text : "";

}

Every technique below imports ask() from this file.
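The ask() helper reads only the first content block. A response's content is an array, so it can in principle be empty or begin with a non-text block. The sketch below shows one more defensive way to pull the text out. The ContentBlock interface is a simplified stand-in for the SDK's own types, and extractText is a hypothetical helper name, not something the SDK provides.

```typescript
// Simplified stand-in for the SDK's content block types (assumption:
// only `type` and `text` matter for this sketch).
interface ContentBlock {
  type: string;
  text?: string;
}

// Joins every text block in order and ignores anything that is not text.
function extractText(content: ContentBlock[]): string {
  const parts: string[] = [];
  for (const block of content) {
    if (block.type === "text" && typeof block.text === "string") {
      parts.push(block.text);
    }
  }
  return parts.join("\n");
}

const sampleContent: ContentBlock[] = [
  { type: "tool_use" },
  { type: "text", text: "First part." },
  { type: "text", text: "Second part." },
];

console.log(extractText(sampleContent)); // prints the two text parts on separate lines
console.log(extractText([]) === "");     // prints true: empty content yields an empty string
```

Inside ask(), this could replace the two lines that read message.content[0], at the cost of a slightly longer helper.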

Step 2 — Zero-Shot Prompting

Zero-shot prompting means you give the model a task with no examples at all — you rely entirely on its pre-trained knowledge.

When to use it: Simple, unambiguous tasks where the expected format is obvious (translation, summarisation, classification of common categories).

// src/zero-shot.ts

import { ask } from "./client";

async function main() {

const result = await ask(

"Classify the sentiment of this review as Positive, Negative, or Neutral.\n\n" +

"Review: 'The onboarding was smooth, but billing support took three days to respond.'"

);

console.log(result);

// → e.g. "Neutral" (without a system prompt, the reply may also include an explanation)

}

main();

Pro tip: Add a system prompt to anchor the model's persona and reduce off-topic preamble:

const result = await ask(

"Classify sentiment: 'The onboarding was smooth, but billing support took three days to respond.'",

"You are a sentiment classifier. Respond with a single word: Positive, Negative, or Neutral."

);
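Even with a single-word system prompt, replies can arrive with different casing, trailing punctuation, or extra words, so it helps to normalise them before using the label in code. Here is a minimal sketch, assuming the three labels from the prompt above. parseSentiment is a hypothetical helper, not part of the SDK.

```typescript
type Sentiment = "Positive" | "Negative" | "Neutral";

const LABELS: Sentiment[] = ["Positive", "Negative", "Neutral"];

// Returns a label only when exactly one of the three labels appears in the
// reply; ambiguous or unrecognised replies yield null so the caller can retry.
function parseSentiment(reply: string): Sentiment | null {
  const lower = reply.toLowerCase();
  const found = LABELS.filter((label) =>
    new RegExp(`\\b${label.toLowerCase()}\\b`).test(lower)
  );
  const [only, ...rest] = found;
  return only !== undefined && rest.length === 0 ? only : null;
}

console.log(parseSentiment("  neutral.\n"));                 // prints Neutral
console.log(parseSentiment("The sentiment is Negative."));   // prints Negative
console.log(parseSentiment("Positive start, negative end")); // prints null (two labels)
```

Returning null instead of guessing keeps a malformed reply from silently turning into a wrong label downstream.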

Step 3 — One-Shot Prompting

One-shot prompting gives the model a single example before your actual question. That one example is enough to show the expected output format or tone.

When to use it: When you need a specific structure the model might not produce by default — JSON shapes, branded copy style, or specialised naming conventions.

// src/one-shot.ts

import { ask } from "./client";

const prompt = `

Convert a bug report into a structured JSON ticket.

Example:

Input: "The login button doesn't work on Safari 17."

Output: {"title":"Login button broken on Safari 17","severity":"high","browser":"Safari","version":"17","component":"auth"}

Now convert:

Input: "The dashboard chart fails to load when the date range exceeds 90 days."

Output:

`;

async function main() {

const result = await ask(prompt);

console.log(result);

// → {"title":"Dashboard chart fails for date ranges over 90 days","severity":"medium","component":"dashboard","trigger":"date range > 90 days"}

}

main();

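Notice how the single example conveyed the JSON shape and naming style without you having to write a schema. One example underdetermines the pattern, though: in the sample output above, the model dropped browser and version, added a trigger key, and had to guess at severity. If the keys must be fixed, list them explicitly in the prompt or add more examples.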
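Because a one-shot prompt only implies its schema, it can be worth comparing the reply's keys with the example's keys before trusting the result. The sketch below shows one way to do that. checkTicketKeys and EXPECTED_KEYS are hypothetical names, and the sample reply is the output shown in the code comment above.

```typescript
// Keys taken from the one-shot example in the prompt.
const EXPECTED_KEYS = ["title", "severity", "browser", "version", "component"];

interface KeyReport {
  missing: string[];
  unexpected: string[];
}

// Parses the reply as JSON and compares its top-level keys with the example's.
// Throws if the reply is not valid JSON or is not a JSON object.
function checkTicketKeys(raw: string): KeyReport {
  const parsed: unknown = JSON.parse(raw);
  if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
    throw new Error("Expected a JSON object");
  }
  const keys = Object.keys(parsed);
  return {
    missing: EXPECTED_KEYS.filter((key) => !keys.includes(key)),
    unexpected: keys.filter((key) => !EXPECTED_KEYS.includes(key)),
  };
}

const sampleReply =
  '{"title":"Dashboard chart fails for date ranges over 90 days",' +
  '"severity":"medium","component":"dashboard","trigger":"date range > 90 days"}';

const ticketReport = checkTicketKeys(sampleReply);
console.log(ticketReport.missing);    // browser and version are missing
console.log(ticketReport.unexpected); // trigger was not in the example
```

A non-empty report is a signal to tighten the prompt, for instance by naming the required keys or adding a second example.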

Step 4 — Few-Shot Prompting

Few-shot prompting provides 2–5 examples to build a richer pattern. It is especially effective when your task involves edge cases, nuanced tone, or domain-specific vocabulary.

When to use it: Labelling datasets, generating content in a house style, extracting structured data from unstructured text.

// src/few-shot.ts

import { ask } from "./client";

const prompt = `

Extract the action item and owner from meeting notes.

Example 1:

Notes: "Alice will update the README before Friday."

Result: {"action":"Update the README","owner":"Alice","deadline":"Friday"}

Example 2:

Notes: "We agreed that Rohan should set up the staging environment by end of sprint."

Result: {"action":"Set up staging environment","owner":"Rohan","deadline":"end of sprint"}

Example 3:

Notes: "No specific owner — someone needs to migrate the legacy database."

Result: {"action":"Migrate legacy database","owner":null,"deadline":null}

Now extract:

Notes: "Priya will coordinate with design to finalise the new onboarding screens by next Wednesday."

Result:

`;

async function main() {

const result = await ask(prompt);

console.log(result);

}

main();

The three examples collectively show the model how to handle named owners, fuzzy deadlines, and null values. A zero-shot prompt tends to handle these cases inconsistently unless you spell out each rule.
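Hand-writing the examples inside a template string gets awkward once you want to add, remove, or reorder them. The sketch below builds the same prompt layout from an array of examples. Shot and buildFewShotPrompt are hypothetical names introduced for illustration.

```typescript
// One worked example: the raw notes and the JSON we want back.
interface Shot {
  notes: string;
  result: { action: string; owner: string | null; deadline: string | null };
}

// Renders the instruction, the numbered examples, and the new input in the
// same layout as the hand-written few-shot prompt.
function buildFewShotPrompt(instruction: string, shots: Shot[], notes: string): string {
  const examples = shots.map(
    (shot, index) =>
      `Example ${index + 1}:\n` +
      `Notes: ${JSON.stringify(shot.notes)}\n` +
      `Result: ${JSON.stringify(shot.result)}`
  );
  const query = `Now extract:\nNotes: ${JSON.stringify(notes)}\nResult:`;
  return [instruction, ...examples, query].join("\n\n");
}

const meetingShots: Shot[] = [
  {
    notes: "Alice will update the README before Friday.",
    result: { action: "Update the README", owner: "Alice", deadline: "Friday" },
  },
  {
    notes: "We agreed that Rohan should set up the staging environment by end of sprint.",
    result: { action: "Set up staging environment", owner: "Rohan", deadline: "end of sprint" },
  },
];

const fewShotPrompt = buildFewShotPrompt(
  "Extract the action item and owner from meeting notes.",
  meetingShots,
  "Priya will coordinate with design to finalise the new onboarding screens by next Wednesday."
);

console.log(fewShotPrompt);
```

Using JSON.stringify for both the notes and the result keeps quoting and escaping consistent across examples, which matters when the notes themselves contain quotation marks.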

Step 5 — Chain-of-Thought Prompting

Chain-of-thought (CoT) prompting instructs the model to reason step by step before committing to an answer. This often improves accuracy on multi-step problems such as maths, debugging, legal reasoning, and architecture decisions.

When to use it: Any task where the final answer depends on multiple intermediate inferences. If you just ask for the answer directly, the model often takes a shortcut and gets it wrong.

// src/chain-of-thought.ts

import { ask } from "./client";

const system = `

You are a senior backend engineer. When solving problems, think step by step.

Show your reasoning before giving your final answer.

`;

const prompt = `

A Node.js API endpoint is returning a 502 Bad Gateway intermittently — roughly 3% of requests.

The service sits behind an NGINX reverse proxy and calls a downstream Postgres database.

CPU and memory are both under 40% utilisation.

Timeouts on the NGINX side are set to 60 seconds.

What are the most likely root causes, and in what order should I investigate them?

`;

async function main() {

const result = await ask(prompt, system);

console.log(result);

}

main();

Forcing step-by-step reasoning explicitly:

const prompt = `

Think through this step by step, then give your final answer.

Question: If a SaaS product charges $29/month and acquires 120 customers in Q1,

losing 8 customers by the end of the quarter, what is the MRR at the end of Q1?

`;

The phrase "think through this step by step" tends to work well across major LLMs and costs you only six words.
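When you evaluate a chain-of-thought prompt, it helps to know the expected answer in advance. The sketch below works through the MRR question and pulls the last dollar amount out of a reply for comparison. It assumes the product had no customers before Q1 and a single flat $29 plan, neither of which the question states outright. Both function names and the sample reply are hypothetical.

```typescript
// Assumptions: zero customers before Q1, one flat monthly price, and churned
// customers are no longer paying at the end of the quarter.
function endOfQuarterMrr(pricePerMonth: number, acquired: number, churned: number): number {
  const activeCustomers = acquired - churned; // 120 - 8 = 112
  return activeCustomers * pricePerMonth;     // 112 * 29 = 3248
}

// Returns the last dollar amount mentioned in a reply, or null if there is none.
// Chain-of-thought replies usually end with the final answer, so the last
// amount is a reasonable (but not guaranteed) proxy for it.
function lastDollarAmount(reply: string): number | null {
  const matches = reply.match(/\$\d[\d,]*(?:\.\d+)?/g);
  if (matches === null) return null;
  const last = matches[matches.length - 1];
  if (last === undefined) return null;
  return Number(last.replace(/[$,]/g, ""));
}

// Hand-written stand-in for a model reply, not real model output.
const sampleCotReply =
  "Customers at end of Q1: 120 - 8 = 112.\n" +
  "MRR: 112 x $29 = $3,248.\n" +
  "Final answer: $3,248 MRR.";

const expectedMrr = endOfQuarterMrr(29, 120, 8);
console.log(expectedMrr);                                      // → 3248
console.log(lastDollarAmount(sampleCotReply) === expectedMrr); // → true
```

If the model instead assumes an existing customer base, its answer will differ, which is a hint that the question itself should state the starting count.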

Step 6 — Combining Techniques

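Real-world prompts rarely fit a single category. Here is a more realistic prompt that pairs few-shot examples with a system prompt enforcing a fixed reasoning sequence (issues first, then risks, then the fix). Pass both strings to ask(prompt, system) to run it: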

const system = `

You are a TypeScript code reviewer. For every review:

1. List the issues you found.

2. Explain the risk each issue poses.

3. Provide a corrected code snippet.

`;

const prompt = `

Here are two example reviews to calibrate your style.

--- EXAMPLE 1 ---

Code:

async function getUser(id) {

return db.query("SELECT * FROM users WHERE id = " + id);

}

Review:

1. SQL injection via string concatenation.

2. Risk: Full database compromise.

3. Fix:

async function getUser(id: string) {

return db.query("SELECT * FROM users WHERE id = $1", [id]);

}

--- EXAMPLE 2 ---

Code:

const token = req.headers.authorization;

Review:

1. No null check before using token.

2. Risk: Runtime crash on unauthenticated requests.

3. Fix:

const token = req.headers.authorization ?? "";

--- NOW REVIEW ---

Code:

export async function deletePost(postId) {

await Post.findOneAndDelete({ _id: postId });

return { success: true };

}

`;
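Since the system prompt asks for three numbered parts, a cheap structural check on the reply can catch responses that drift from the format. The sketch below is deliberately shallow: it only confirms that lines starting with "1.", "2." and "3." appear in order. hasReviewSections is a hypothetical helper, and the sample review is adapted from Example 1 above.

```typescript
// Returns true when lines beginning "1.", "2." and "3." appear in that order.
// It does not verify what each section contains.
function hasReviewSections(reply: string): boolean {
  let next = 1;
  for (const rawLine of reply.split("\n")) {
    if (next <= 3 && rawLine.trim().startsWith(`${next}.`)) {
      next += 1;
    }
  }
  return next === 4;
}

const goodReview = [
  "1. SQL injection via string concatenation.",
  "2. Risk: Full database compromise.",
  "3. Fix:",
  'return db.query("SELECT * FROM users WHERE id = $1", [id]);',
].join("\n");

console.log(hasReviewSections(goodReview));          // → true
console.log(hasReviewSections("Looks fine to me.")); // → false
```

A failed check is a reasonable trigger for a retry or for adding a third calibration example to the prompt.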

Prompting Technique Comparison

| Technique | Examples given | Best for | Typical token cost |

|---|---|---|---|

| Zero-shot | 0 | Simple, clear-cut tasks | Low |

| One-shot | 1 | Format / style anchoring | Low–Medium |

| Few-shot | 2–5 | Edge cases, domain vocab, labelling | Medium |

| Chain-of-thought | 0–few + reasoning instruction | Logic, debugging, multi-step inference | Medium–High |

| CoT + Few-shot (combined) | 2–5 + reasoning | Complex structured output with reasoning | High |

What's Next

Now that you have the four core techniques down, here are natural next steps to level up your prompting:

- Structured output with JSON mode: On APIs that support it (for example, OpenAI's Chat Completions API), pass response_format: { type: "json_object" } to constrain the reply to valid JSON. The Anthropic calls used in this guide do not take this parameter, so check your provider's documentation for its structured-output option.

- Prompt chaining: Break complex tasks into a pipeline of smaller, focused prompts where each output feeds the next as input.

- Retrieval-Augmented Generation (RAG): Combine prompting with a vector database to ground the model's answers in your own documentation.

- Automated prompt evaluation: Write test suites that score model responses against expected outputs so you can iterate on prompts safely.
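To make the last item concrete, here is a minimal sketch of an exact-match scorer for a classification prompt. It takes replies that have already been collected, so it runs without an API key. The names, test cases, and stand-in replies are all hypothetical; real evaluations often need fuzzier matching than this.

```typescript
interface EvalCase {
  input: string;
  expected: string;
}

interface EvalFailure {
  input: string;
  expected: string;
  actual: string;
}

interface EvalResult {
  accuracy: number;
  failures: EvalFailure[];
}

// Compares each reply with its expected label, ignoring case and
// surrounding whitespace, and reports accuracy plus the failing cases.
function scoreResponses(cases: EvalCase[], responses: string[]): EvalResult {
  if (cases.length !== responses.length) {
    throw new Error("cases and responses must have the same length");
  }
  const failures: EvalFailure[] = [];
  cases.forEach((testCase, index) => {
    const actual = (responses[index] ?? "").trim();
    if (actual.toLowerCase() !== testCase.expected.toLowerCase()) {
      failures.push({ input: testCase.input, expected: testCase.expected, actual });
    }
  });
  const passed = cases.length - failures.length;
  return { accuracy: cases.length === 0 ? 0 : passed / cases.length, failures };
}

const sentimentCases: EvalCase[] = [
  { input: "Love it, works perfectly.", expected: "Positive" },
  { input: "Crashes every time I open it.", expected: "Negative" },
  { input: "It arrived on Tuesday.", expected: "Neutral" },
  { input: "Refund took a month. Never again.", expected: "Negative" },
];

// Stand-in replies; in practice these would come from calls to ask().
const modelResponses = ["Positive", "negative", "Positive", "Negative\n"];

const evalReport = scoreResponses(sentimentCases, modelResponses);
console.log(evalReport.accuracy);        // → 0.75
console.log(evalReport.failures.length); // → 1
```

Re-running the same cases after each prompt change gives you a number to compare, which makes prompt edits less of a guessing game.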

Further Reading

- Many-shot prompting research — Anthropic

- Prompt engineering guide — OpenAI

- Prompting Guide — DAIR.AI

- Chain-of-Thought Prompting Elicits Reasoning in Large Language Models — Wei et al.

- Learn Prompting — open-source prompting course

- Claude Prompt Engineering Overview — Anthropic Docs

Closing

The techniques in this guide map directly to patterns used throughout the habitualcs.io codebase and the open-source tools built on top of the Claude API. If you want to see them applied in a real content-management workflow — including how prompts drive the Siloscape MCP server — check out the siloscape-mcp repository on GitHub. Star it, open an issue, or send a PR — contributions are very welcome.
